Taming the vibes 🐍

A day of vibe-coding, as a break from the normal routine of study. Done in a new environment with a language I’ve not used: a VS Code extension in TypeScript.

New theme - looks crisp and makes the posts easy to read 👍 😎

I’ve been dancing around Probability Theory, its history, its application, and weeding out what is Frequentist from what is Bayesian. Then relating it to Rational Psychology. I’m not there, getting there, but not there. This isn’t what I do full time but it is what I think about when I’m not working, parenting, or socialising. Thankfully it’s not interesting to friends and family so I get a break from it myself! 😆

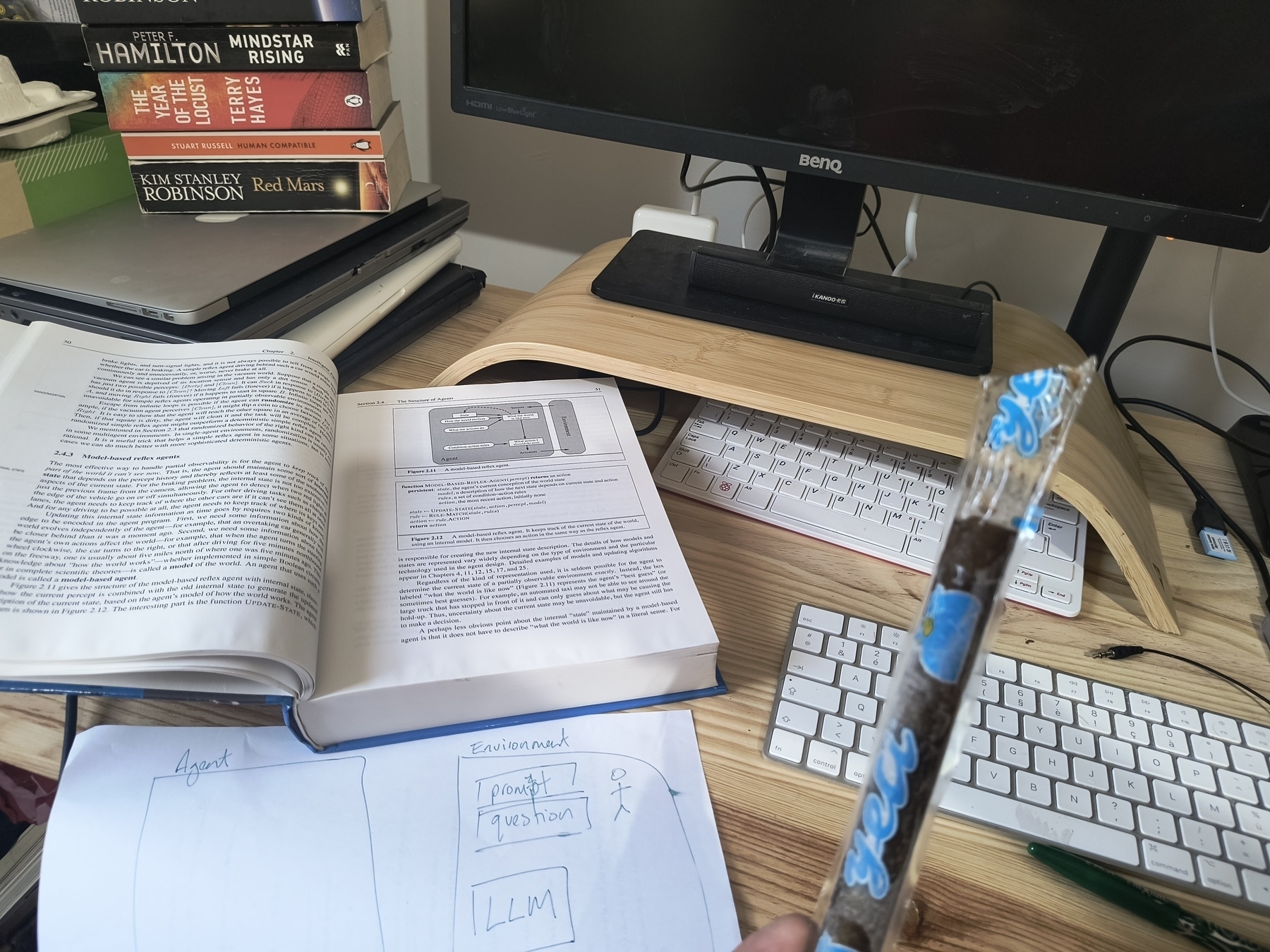

I am very torn between two possibilities:

- Building on the Q-Learning Maze Solving Agent I did for AI Applications (Q-Learning Maze Solving Agent) by adding a Neural Network (Sutton and Barto)
- Building on the Intelligent Agents work I did in AI by applying an Agent Decisions Process (Self-Consistency LLM-Agent) (the process is my interpretation of Russell and Norvig’s work)
- (The Douglas Adams extra option 🤓) Adding a cached “self-awareness” layer based on a Bayesian Learning Agent that stores its certainty on the answers it gives.

A very interesting paper on Critical Thinking in an LLM (or lack thereof):

“Our study investigates how language models handle multiple-choice questions that have no correct answer among the options. Unlike traditional approaches that include escape options like None of the above (Wang et al., 2024a; Kadavath et al., 2022), we deliberately omit these choices to test the models’ critical thinking abilities. A model demonstrating good judgment should either point out that no correct answer is available or provide the actual correct answer, even when it’s not listed.”

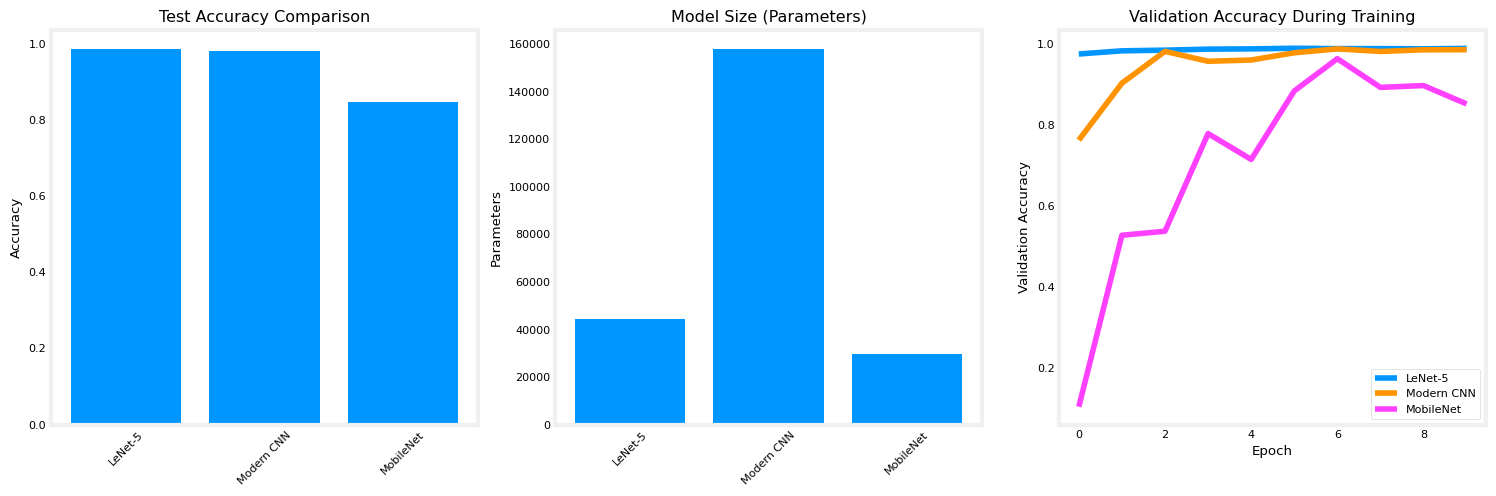

The old ‘un still does the job on the MNIST Handwritten dataset!

Ska-en-Provence 🎶🎉

I wish I had time to finish:

- my research on the Evolution of Probabilistic Reasoning in AI, particularly Dempster-Shafer and Bayesian Networks
- How LLMs and Bayesian networks can be used for Risk Management
- creating a YouTube/Insta/TikTok vid for my latest post on the LLM Agent

But I don’t!! So this is me putting it to one side…

Polish is cheap in this Brave New World of AI. Being scrappy is a way of being authentic and, most importantly, Being Human!

Building a Self-Consistency LLM-Agent: From PEAS Analysis to Production Code - a guide to designing an LLM-based agent.

A refreshing AI-en-Provence 🍦

AI-en-Provence 🤓😂✌🏼✌🏼✌🏼

The article discusses how to implement Bayes’ Theorem in a learning agent that updates its beliefs about an environment based on new evidence, illustrated through a game of guessing a number derived from a dice throw.
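A rough sketch of that belief-update idea, not the article’s actual code. The game rules here are my own stand-in: the hidden number is the face of a fair six-sided die, and the agent gets noisy hints that are assumed to be truthful 80% of the time. The names `bayes_update`, `says_even`, `says_greater_than_three` and `RELIABILITY` are made up for illustration.

```python
from fractions import Fraction


def bayes_update(prior, likelihood):
    """Return the posterior P(h | e) from a prior P(h) and a likelihood P(e | h)."""
    unnormalised = {h: p * likelihood(h) for h, p in prior.items()}
    evidence = sum(unnormalised.values())  # P(e), the normalising constant
    if evidence == 0:
        raise ValueError("evidence has zero probability under the prior")
    return {h: p / evidence for h, p in unnormalised.items()}


# Before any evidence, every die face is equally likely.
prior = {face: Fraction(1, 6) for face in range(1, 7)}

# Assumption: each hint is truthful with probability 4/5.
RELIABILITY = Fraction(4, 5)


def says_even(face):
    """P(hint 'the number is even' | face), hypothetical hint model."""
    return RELIABILITY if face % 2 == 0 else 1 - RELIABILITY


def says_greater_than_three(face):
    """P(hint 'the number is greater than 3' | face), hypothetical hint model."""
    return RELIABILITY if face > 3 else 1 - RELIABILITY


# Each posterior becomes the prior for the next piece of evidence.
posterior = bayes_update(prior, says_even)
posterior = bayes_update(posterior, says_greater_than_three)

# The agent guesses the face it currently believes in most.
best_guess = max(posterior, key=posterior.get)

for face, belief in posterior.items():
    print(face, belief)
```

Exact fractions are used instead of floats so the beliefs always sum to exactly 1 and are easy to check by hand. The agent never becomes certain after two noisy hints; it just shifts weight towards the faces that fit both.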

Reasoning vs Stream of Consciousness - the output of a transformer is not reasoned in the way we think it is.

What is knowledge? Wtf am I trying to learn! Claude “thinks” this post is mental masturbation 😆 well even the physical version serves a good purpose! 🤷🏼‍♂️

Introduction: In the previous post, where I shared my view on “Why Study Logic?”, we looked at Knowledge Representation and highlighted the importance of Logic and Reasoning in storing and accessing Knowledge. In this post I’m going to highlight a section from the book “Introduction to Artificial Intelligence” by Wolfgang Ertel. His approach with this book was to make AI more accessible than Russell and Norvig’s 1000+ page bible. It worked for me.

Introduction: The purpose of this article is to help me answer the question “Why am I studying Logic?”. If it helps you, that’d be great, let me know! The question comes from a nagging feeling: why don’t I see logic used more in the ‘real world’? It could be a personal bias, as I more easily see the utility of Rosenblatt’s work, where he looked at both Symbolic Logic and Probability Theory to help solve a problem and chose Probability Theory ([NN Series 1/n] From Neurons to Neural Networks: The Perceptron). With that we had the birth of the Artificial Neuron and the rest is history!

“There must be an invisible sun, giving heat to everyone”

“[IA Series 3/n] Intelligent Agents Term Sheet” breaks down essential AI terminology from Russell & Norvig’s seminal textbook. Learn what makes agents rational (or irrational), understand different agent types, and follow a structured 5-step design process from environment analysis to implementation. Perfect reference for AI practitioners and students. Coming next: how agents mirror human traits. #ArtificialIntelligence #IntelligentAgents #AIDesign