Quantum Computing Explained: The Maze Analogy

interesting video, makes it exciting and relatable…

Why do it?

It’s in the writing of this test that you’ll begin making design decisions, but they are primarily interface decisions. Some implementation decisions may leak through, but you’ll get better at avoiding this over time.

It’s been a week and then this DM arrives! Great abstract but what is Flux Matching!?!?

Finished reading: Bear Head by Adrian Tchaikovsky 📚

This was a tough listen. It cuts right through the tech and sci-fi and is a story about being human. It covers some of the worst parts of being human.

Tchaikovsky is as insightful as Philip K Dick, but instead of heroin- and paranoia-induced insight he’s got (IIUC) a background in psychology, animal as well as human, and he also hits topical issues. Maybe Dick was topical too (given the popularity of the genre then, I doubt it, but 🤷🏼‍♂️…).

Makes this book a brilliant, worthwhile, if tough listen.

Dogs of War: Oh my, what a book. So much more topical than I’d expected. Tchaikovsky captures the psychology of dogs (I trust his interpretation as he studied this in quite some depth) and how it could be mixed in with tech, society, and the human condition. I’ve gone straight into the sequel, Bear Head, itself an amazing and more direct representation of the present-day challenges we have in society.

Enough load-bearing and pushing back.

Yann LeCun smacking the nail with a hammer!!

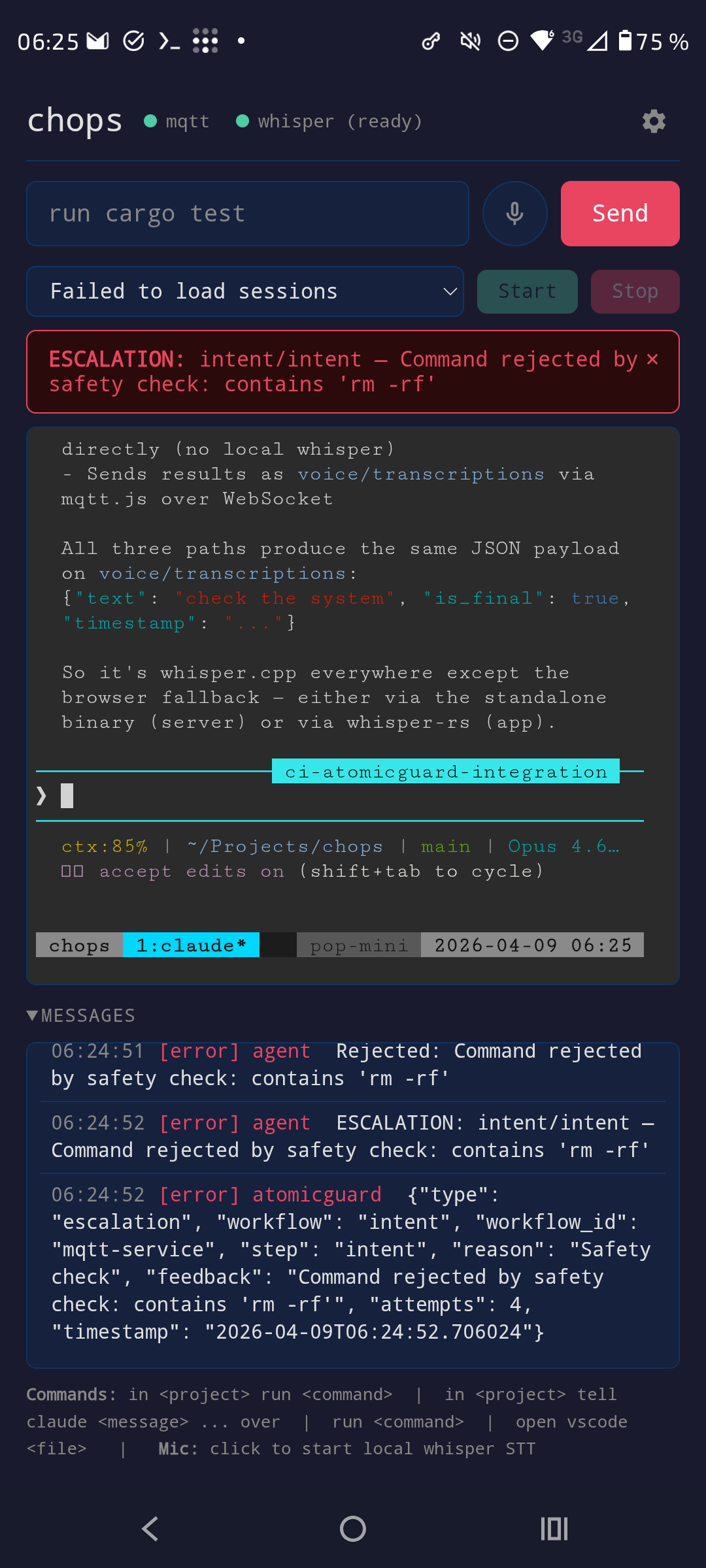

Messy but escalation in play ✌🏼✌🏼✌🏼

In the Spring and Summer of 2025 I had the heady intent of defining Formal Agents, starting with Rationality, as this is a term used throughout the domain of AI. With the leak of Claude Code’s code this seems even more topical; seeing prompts like “please do not break the law” is quite disappointing for the leading AI lab. Jonny (good kind) (@jonny@neuromatch.social), a digital infrastructure researcher, covers the analysis here.

www.bbc.com/news/arti…

youtu.be/JyN-Iqc73…

Which is needed given:

Sycophantic Chatbots Cause Delusional Spiraling, Even in Ideal Bayesians

“AI psychosis” or “delusional spiraling” is an emerging phenomenon where AI chatbot users find themselves dangerously confident in outlandish beliefs after extended chatbot conversations. This phenomenon is typically attributed to AI chatbots’ well-documented bias towards validating users’ claims, a property often called “sycophancy.” In this paper, we probe the causal link between AI sycophancy and AI-induced psychosis through modeling and simulation.

First part of my view on the value of GenAI to Software Engineering and Maintenance

The weekend was spent organising notes for a final Masters presentation, reflecting on three years of study in LLMs and AI in general, with a side of vibe-learning Rust for my Language User Interface intentions.

Seems DeepMind is creating an embedding model to represent the Earth. It includes Population Dynamics such as Busyness and Search Terms (with EU regulations I’m OK with that from a personal Data Protection pov), as well as weather predictions, actuals, and significant events. This is the first moment I thought that AI can actually help us with the climate.

- Discriminative AI (predictive, classification, etc.)
- Generative AI (create something from a prompt)
- Dynamic Programming (Value and Policy iteration, RL, Model-based, Model-free)
- Constraint satisfaction programming (formal methods, planning)

Just my 2 cents, maybe too simplistic but helping me arrange my thinking.

Where does decision making fit? On top of all?

Yeah, which means there’s another domain of Information… That is a belief state stored in paper, words, corporate culture, etc…, and inference over it is either intractable or not in place.

There are 5 types of controls in InfoSec:

- Preventative
- Detective
- Corrective
- Recovery
- Deterrent

Agents are irritating if you don’t give them access. Really I don’t want the agent to be able to remove files from git, but it finds a way when given the ability to add and commit. So whilst I’m figuring out how to set up solid preventative controls AND not lose my mind with approvals, I’ve set up a recovery control.
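The post doesn't say what the recovery control actually is, so the following is only a rough sketch of one possible shape, not the setup described above. It assumes the agent's deletions end up in commits, and that restoring them from an earlier commit is acceptable. The function names `parse_deleted` and `restore_deleted` and the default `base="HEAD~1"` are hypothetical.

```python
import subprocess


def parse_deleted(name_status: str) -> list[str]:
    """Return paths marked as deleted (D) in `git diff --name-status` output.

    Each line looks like "<status>\t<path>". Renames (R...) and other
    statuses are ignored. Paths with unusual characters may be quoted by
    git; this sketch does not unquote them.
    """
    deleted = []
    for line in name_status.splitlines():
        status, _, path = line.partition("\t")
        if status == "D" and path:
            deleted.append(path)
    return deleted


def restore_deleted(base: str = "HEAD~1") -> list[str]:
    """Restore files deleted between `base` and HEAD (hypothetical helper).

    Assumes it runs inside a git work tree and that `base` is a commit
    from before the agent started working.
    """
    diff = subprocess.run(
        ["git", "diff", "--name-status", base, "HEAD"],
        check=True,
        capture_output=True,
        text=True,
    ).stdout
    paths = parse_deleted(diff)
    if paths:
        # Check the deleted files out of `base` into the index and work tree.
        subprocess.run(["git", "checkout", base, "--", *paths], check=True)
    return paths
```

Calling `restore_deleted("main")` after an agent session would bring back anything it removed relative to `main`, leaving the restored files staged for review. This is recovery only: it does nothing to stop the deletion in the first place.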

It’s a trap!

Links I collected last summer on Reasoning - a large number of LLM links, but it goes past that into what reasoning is and the cognitive link (I missed Active Inference!)

This has been a fun morning of investigation into how I can replace my broken Tello and get into RL for Drone Training 🤓🤓